Mike Qaissaunee, a Professor of Engineering and Technology at Brookdale Community College in Lincroft, New Jersey, shares his experiences and perspectives on integrating new technologies in and approaches to teaching and learning.

Wednesday, December 22, 2010

More Linux Resources

Tuesday, December 21, 2010

The Birth of Spintronics

Spintronics: A New Way To Store Digital Data:

Scientists at the University of Utah have taken an important step toward the day when digital information can be stored in the spin of an atom's nucleus, rather than as an electrical charge in a semiconductor.

The scientists' setup requires powerful magnets and can only be operated at minus 454 degrees Fahrenheit, so don't expect to see spin memory on the shelf at a computer store anytime soon.

Christoph Boehme, an associate professor at the University of Utah, says the most important thing he and his team have done is show that it's possible to store information in spin and read it rather easily.

Here's how they did it: First, they used a strong magnetic field to make sure all their atoms were pointing in the same direction. Then they measured which way the nucleus of an atom was spinning. Physicists don't talk about spinning clockwise or counterclockwise — they call the spins either up or down.

"This up and down can now represent information," says Boehme. "An up means a one, and a down means a zero."

Storing and manipulating these zeroes and ones — bits, in computer parlance — is at the heart of how computers work. Today, those zeroes and ones are stored using electric charge — positive or negative. In the future, things might be different.

"Instead of electronics, people want to use spins and build spintronics, and if you do so, you need to be able to store information," says Boehme.

Data Encryption, the EFF and Code Breaking

An interesting history of DES - the Data Encryption Standard - and the efforts of the Electronic Frontier Foundation to demonstrate the inherent weaknesses in DES. At the time, DES was the federal standard for encryption of all non-classified data. It's interesting that the first crack was demonstrated as early as 1997, but a replacement - the Advanced Encryption Standard (AES) - did not take effect until mid-2002.

EFF DES cracker - Wikipedia, the free encyclopedia:

In cryptography, the EFF DES cracker (nicknamed "Deep Crack") is a machine built by the Electronic Frontier Foundation (EFF) in 1998 to perform a brute force search of DES cipher's key space — that is, to decrypt an encrypted message by trying every possible key. The aim in doing this was to prove that DES's key is not long enough to be secure.

DES uses a 56-bit key, meaning that there are 2^56 possible keys under which a message can be encrypted. This is exactly 72,057,594,037,927,936, or approximately 72 quadrillion, possible keys. When DES was approved as a federal standard in 1976, a machine fast enough to test that many keys in a reasonable amount of time would have cost an unreasonable amount of money to build.
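The size of that key space is easy to check with shell arithmetic. This is only a minimal sketch, assuming a shell with 64-bit integer arithmetic (which bash and dash provide on current Linux systems); the variable names are made up for illustration.

```shell
# Number of possible 56-bit DES keys: 2 raised to the 56th power.
keys=$((1 << 56))
echo "possible keys: $keys"

# A brute-force search finds the key, on average, after trying half of them.
average_tries=$((keys / 2))
echo "average tries: $average_tries"
```

Each extra key bit doubles both numbers, which is why key length matters so much against brute-force search.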

The DES challenges

Since DES was a federal standard, the US government encouraged the use of DES for all non-classified data. RSA Security wished to demonstrate that DES's key length was not enough to ensure security, so they set up the DES Challenges in 1997, offering a monetary prize. The first DES Challenge was solved in 96 days by the DESCHALL Project led by Rocke Verser in Loveland, Colorado. RSA Security set up DES Challenge II-1, which was solved by distributed.net in 41 days in January and February 1998.

In 1998, the EFF built Deep Crack for less than $250,000.[1] In response to DES Challenge II-2, on July 17, 1998, Deep Crack decrypted a DES-encrypted message after only 56 hours of work, winning $10,000. This was the final blow to DES, against which there were already some published cryptanalytic attacks. The brute force attack showed that cracking DES was actually a very practical proposition. For well-endowed governments or corporations, building a machine like Deep Crack would be no problem.

Six months later, in response to RSA Security's DES Challenge III, and in collaboration with distributed.net, the EFF used Deep Crack to decrypt another DES-encrypted message, winning another $10,000. This time, the operation took less than a day — 22 hours and 15 minutes. The decryption was completed on January 19, 1999. In October of that year, DES was reaffirmed as a federal standard, but this time the standard recommended Triple DES (also referred to as 3DES or TDES).

The small key-space of DES, and relatively high computational costs of triple DES resulted in its replacement by AES as a Federal standard, effective May 26, 2002.

Photo: German-Dutch Enigma machine by Bogdan Migulski - http://flic.kr/p/4yfuYE

Monday, December 20, 2010

Things the Internet Has Really Killed

He then offers his own list of things the Internet has killed:

- Government secrecy.

- Opaque markets.

- Central control.

- Power elites.

- Borders.

- Inefficiency.

- Ignorance.

- Newsweek.

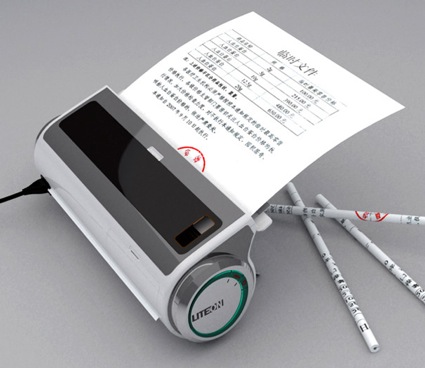

Recycling - Turning Paper into Pencils

This is very cool. Not sure where you get the lead and the glue, but it's a really great innovation. We've got to get our kids excited about STEM careers, so we can be the ones churning out these ideas!

I imagine these pencils would be a great marketing tool and popular giveaway at conferences.

P&P Office Waste Paper Processor Turns Paper into Pencils :

The P&P Office Waste Paper Processor turns paper into pencils when you add the lead, glue, and power. It also includes a pencil sharpener on the side.

Photo via yankodesign

Great Presentation from Hans Rosling

Here's another great presentation from Hans Rosling. It would be great if we had the resources in academia to develop content of this quality.

Free E-Book: Web Tools for Educators

Here is the book. Download it, pass it out, share it with your colleagues, administrators, teammates.

Super Book Of Web Tools For Educators -

The 10 Most Destructive Hacker Attacks In The Past 25 Years

With the recent WikiLeaks-related DoS and DDoS attacks, here's a great rundown of hacker attacks from the last 25 years. Also, here's a great rundown of the WikiLeaks attacks from Sam Bowne.

The 10 Most Destructive Hacker Attacks In The Past 25 Years:

The Daily Beast runs through 10 of the most infamous hacks, worms, and DDoS takedowns in the last 25 years, from a computer virus named for a stripper to the mysterious 102nd caller at Los Angeles’s KIIS-FM.

Sunday, December 19, 2010

Friday, December 17, 2010

Is Your Blog Mobile Ready?

Google's blogging service just added mobile templates in the beta version of their service draft.blogger.com. The mobile version is designed for webkit-based browsers, so it should render properly on iOS and Android devices.

Here's a screenshot of my blog on the desktop,

and a couple screenshots of the mobile version - from my iPhone

Blogger in Draft: New mobile templates for reading on the go

Sir Ken Robinson on Changing Education Paradigms

Thursday, December 16, 2010

Google's Chrome OS - The Network Computer Lives Again

- more ubiquitous broadband,

- better hardware,

- Google's resources behind it,

- an ad-supported model, and

- most importantly - cloud-based apps have gained mainstream acceptance, so there's no barrier to adoption.

In previous iterations, the vision was stymied by the lack of reliable broadband connectivity everywhere, and effectively hijacked by Microsoft. Will the Windows giant, this time around, lose out to the approach conceived by Sun, Oracle and Google – a stripped-down device with long battery life and minimal local storage or apps, connecting for its data and services to the cloud (which we used to call the server)? Google pitched its latest definition of the thin client, with the launch of Chrome OS and a next generation netbook

...

The thin client reinvented

Back in 1997, Oracle chief Larry Ellison and Microsoft's Bill Gates went head-to-head on stage at a technology conference in Paris, with Ellison unleashing the new approach to computing he had cooked up with Scott McNealy, then head of Sun, and designed to kill the traditional "fat" PC with its growing software, memory and storage burden. Oracle and Sun floated the concept of thin, internet-connected clients and were joined later by Google, with its broad vision of putting every app and every piece of data and content in the cloud, to be accessed by a widening range of always-connected, slimline and mobile gadgets.

“A PC is a ridiculous device. What the world really wants is to plug into a wall to get electronic power, and plug in to get data,” said Ellison at the time.

Back then, the client device was called the "network computer", but it largely failed because of user wariness of letting their data and apps out of their own hands (still a major factor, which sees smartphones gaining ever larger memories), and because always-on connectivity was not available, so the concept was largely chained to the desk. Ellison said at the launch of the network computer: "We'll see hundreds of thousands of machines shipped in the first year. Very quickly, we'll see entire industry move to this model. By the year 2000, NCs will outsell personal computers."

This prediction worked out like most such forecasts for the latest big thing in devices.

Richard Stallman On Hacking

The hacking community developed at MIT and some other universities in the 1960s and 1970s. Hacking included a wide range of activities, from writing software, to practical jokes, to exploring the roofs and tunnels of the MIT campus. Other activities, performed far from MIT and far from computers, also fit hackers' idea of what hacking means...

It is hard to write a simple definition of something as varied as hacking, but I think what these activities have in common is playfulness, cleverness, and exploration. Thus, hacking means exploring the limits of what is possible, in a spirit of playful cleverness. Activities that display playful cleverness have "hack value".

...

Yet when I say I am a hacker, people often think I am making a naughty admission, presenting myself specifically as a security breaker. How did this confusion develop?

Around 1980, when the news media took notice of hackers, they fixated on one narrow aspect of real hacking: the security breaking which some hackers occasionally did. They ignored all the rest of hacking, and took the term to mean breaking security, no more and no less. The media have since spread that definition, disregarding our attempts to correct them. As a result, most people have a mistaken idea of what we hackers actually do and what we think.

You can help correct the misunderstanding simply by making a distinction between security breaking and hacking—by using the term 'cracking' for security breaking. The people who do it are 'crackers'. Some of them may also be hackers, just as some of them may be chess players or golfers; most of them are not.

Wednesday, December 15, 2010

Basic Linux Command-line Tips and Tricks - Part 2

21. dpkg -l : To get a list of all the installed packages.

22. Use of '>' and '>>': The '>' symbol (output redirection) writes content to a file when used with the cat command, whereas '>>' appends to a file. If only '>' is used, the previous content of the file is deleted and replaced with the new content.

e.g: $ touch text (creates an empty file)

$ cat > text

This is text’s text. ( Save the changes to the file using Ctrl +D)

$ cat >> text

This is a new text. (Ctrl + D)

Output of the file:

This is text’s text.

This is a new text.
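The same difference can be seen without typing at the keyboard. The following is a small sketch that uses echo instead of an interactive cat session and writes to a temporary file (the variable name file is an assumption for illustration), so nothing real is overwritten.

```shell
# Use a throwaway file so no real data is touched.
file=$(mktemp)

echo "This is text's text." > "$file"    # > creates or overwrites the file
echo "This is a new text." >> "$file"    # >> appends to the end
cat "$file"                              # both lines are printed

echo "Replaced." > "$file"               # > again: the old content is gone
cat "$file"                              # only "Replaced." is printed
```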

23. To count the number of users logged in: who | wc -l

24. cat: The cat command can be used trickily in the following ways:

- To count the number of lines in a file: cat [file] | wc -l

- To count the number of words in a file: cat [file] | wc -w

- To count the number of characters (bytes) in a file: cat [file] | wc -c

25. To search a file for lines matching a pattern: cat [file] | grep [pattern]
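Here is a quick sketch of the counting and searching tips, run against a small sample file created on the spot. The file contents and variable names are invented for the example. Note that wc and grep can also read the file directly, which saves the extra cat process.

```shell
# Build a two-line sample file.
file=$(mktemp)
printf 'one two\nthree four five\n' > "$file"

lines=$(cat "$file" | wc -l)       # number of lines
words=$(cat "$file" | wc -w)       # number of words
chars=$(wc -c < "$file")           # number of bytes, read without cat

echo "lines=$lines words=$words chars=$chars"

# Print only the lines that contain the pattern "four".
grep 'four' "$file"
```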

26. The ‘tr’ command: Used to translate the characters of a file.

tr 'a-z' 'A-Z' < text > text1 : For example, this command translates all the lower-case characters of the 'text' file to upper case and saves the result to a new file 'text1'.
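Because tr reads only from standard input, the file has to be fed to it with a redirection. A minimal sketch, using two temporary files whose names (src and dst) are assumptions for the example:

```shell
src=$(mktemp)
dst=$(mktemp)
printf 'hello world\n' > "$src"

# tr takes no filename argument: read from src, write the result to dst.
tr 'a-z' 'A-Z' < "$src" > "$dst"

cat "$dst"
```

The source file is left unchanged; only the new file holds the upper-case text.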

27. File permission using chmod: 'chmod' can be used directly to change the permissions of files in a simple way by giving the permission for owner, group and others in a numeric form, where the numeric values are as follows:

r(read-only)=>4

w(write)=>2

x(executable)=>1

e.g. chmod 754 text sets the permissions on the text file to read, write and execute for the owner (4+2+1=7), read and execute for the group (4+1=5), and read only for others (4).
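To see the numeric mode turn into the familiar rwx letters, here is a short sketch on a temporary file. The variable names are illustrative, and the cut step simply keeps the first ten characters of the ls -l output, which hold the file type and the three permission groups.

```shell
file=$(mktemp)

# 7 = 4+2+1 (rwx) for the owner, 5 = 4+1 (r-x) for the group, 4 (r--) for others
chmod 754 "$file"

# Keep only the type and permission columns of the long listing.
perms=$(ls -l "$file" | cut -c1-10)
echo "$perms"
```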

28. more: It is a filter for paging through text one screenful at a time.

Use it with any of the commands after the pipe symbol to increase readability.

e.g. ls -ll |more

29. cron : Daemon to execute scheduled commands. Cron enables users to schedule jobs (commands or shell scripts) to run periodically at certain times or dates.

* * * * * echo "hi" > /dev/tty1 displays the text "hi" every minute on tty1. (A leading 1, as in 1 * * * *, would instead run the command once an hour, at minute 1.)

.---------------- minute (0 - 59)
|  .------------- hour (0 - 23)
|  |  .---------- day of month (1 - 31)
|  |  |  .------- month (1 - 12) OR jan,feb,mar,apr ...
|  |  |  |  .---- day of week (0 - 7) (Sunday=0 or 7) OR sun,mon,tue,wed,thu,fri,sat
|  |  |  |  |
*  *  *  *  *  command to be executed

Source of example: Wikipedia

30. fsck: Used for file system checking. On a non-journaling file system the fsck command can take a very long time to complete. Using it with the option -C displays a progress bar, which doesn't increase the speed but lets you know how long you still have to wait for the process to complete.

e.g. fsck -C

31. To find the path of the command: which command

e.g. which clear

32. Setting up alias: Enables a replacement of a word with another string. It is mainly used for abbreviating a system command, or for adding default arguments to a regularly used command

e.g. alias cls='clear' => makes cls an alias for clear

33. The du (disk usage) command can be used with the option -h to print the space occupied in human readable form. More specifically it can be used with the summation option (-s).

e.g. du -sh /home summarizes the total disk usage by the home directory in human readable form.

34. Two or more commands can be combined with the && operator. However, the succeeding command is executed if and only if the previous one succeeds (exits with status 0).

e.g. ls && date lists the contents of the directory first and then gives the system date.
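The built-in commands true and false make the behaviour easy to observe, since one always succeeds and the other always fails. The sketch below records what actually ran in a temporary log file (the log variable is made up for the example) and also shows ||, the opposite operator, for contrast.

```shell
log=$(mktemp)

true  && echo "after true"  >> "$log"    # true succeeds, so echo runs
false && echo "after false" >> "$log"    # false fails, so echo is skipped
false || echo "fallback"    >> "$log"    # || runs the next command only on failure

cat "$log"
```

A typical real use is chaining steps that only make sense together, such as changing into a directory and then acting on its contents.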

35. Surfing the net in text only mode from the terminal: elinks [URL]

e.g: elinks www.google.com

Note that the elinks package has to be installed in the system.

36. The ps command displays a great deal more information than the kill command does.

37. To extract a no. of lines from a file:

e.g head -n 4 abc.c is used to extract the first 4 lines of the file abc.c

e.g tail -n 4 abc.c is used to extract the last 4 lines of the file abc.c
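As a quick illustration, the sketch below builds a ten-line sample file and pulls lines from both ends. The file contents and variable names are assumptions made for the example. Piping head into tail is also a handy way to grab a range from the middle.

```shell
# Sample file containing the numbers 1 to 10, one per line.
file=$(mktemp)
printf '%s\n' 1 2 3 4 5 6 7 8 9 10 > "$file"

head -n 4 "$file"                  # first four lines: 1 to 4
tail -n 4 "$file"                  # last four lines: 7 to 10

# Lines 5 and 6: take the first six lines, then the last two of those.
middle=$(head -n 6 "$file" | tail -n 2)
echo "$middle"
```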

38. Any changes to a file might cause loss of important data unknowingly. Hence many Linux text editors (gedit and Emacs, for example) save a copy of the file without the recent changes under the same name followed by a ~ (tilde) sign. This comes in really handy when playing with configuration files, as some sort of a backup is created.

39. A variable can be defined with an ‘=’ operator. Now a long block of text can be assigned to the variable and brought into use repeatedly by just typing the variable name preceded by a $ sign instead of writing the whole chunk of text again and again.

e.g ldir=/home/my/Desktop/abc

cp abcd $ldir copies the file abcd to /home/my/Desktop/abc.
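Two details tend to trip people up here: there must be no spaces around the = sign, and it is safer to put the variable in double quotes when using it, so that paths containing spaces still work. A minimal sketch, using a temporary directory (base is a hypothetical name) in place of a real home directory:

```shell
# Stand-in for /home/my so the example does not touch real files.
base=$(mktemp -d)

ldir="$base/Desktop/abc"       # no spaces around =
mkdir -p "$ldir"

echo "sample" > "$base/abcd"
cp "$base/abcd" "$ldir"        # quoting "$ldir" keeps odd paths intact

ls "$ldir"
```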

40. To find all the files in your home directory modified or created today:

e.g. find ~ -type f -mtime 0
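Strictly speaking, -mtime 0 matches files modified within the last 24 hours rather than since midnight. The sketch below shows the effect on a temporary directory holding one fresh file and one whose modification time has been set back to the year 2000 with touch -t; the file names are invented for the example.

```shell
dir=$(mktemp -d)

touch "$dir/new.txt"                     # modified just now
touch -t 200001010000 "$dir/old.txt"     # modification time: 1 Jan 2000, 00:00

# Only files changed within the last 24 hours are listed.
find "$dir" -type f -mtime 0
```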

Basic Linux Command-line Tips and Tricks - Part 1

- Everything in Linux is a file including the hardware and even the directories.

- # : Denotes the super(root) user

- $ : Denotes the normal user

- /root: Denotes the super user’s directory

- /home: Contains the normal users’ home directories.

- Switching between Terminals

Ctrl + Alt + F1-F6: Console login

Ctrl + Alt + F7: GUI login

- The Magic Tab: Instead of typing the whole filename, type enough characters to identify the file uniquely and press the Tab key; the remaining characters are completed automatically.

- ~(Tilde): Denotes the current user’s home directory

- Ctrl + Z: To stop a command that is working interactively without terminating it.

- Ctrl + C: To stop a command that is not responding. (Cancellation).

- Ctrl + D: To send the EOF( End of File) signal to a command normally when you see ‘>’

- Ctrl + W: To erase the text you have entered a word at a time.

- Up arrow key: To redisplay the last executed command. The Down arrow key can be used to print the next command used after using the Up arrow key previously.

- The command history can be cleared with the -c (clear) option: history -c

- cd : The cd command can be used trickily in the following ways:

cd : To switch to the current user’s home directory

cd * : To change directory to the first file in the directory (only if the first file is a directory)

cd .. : To move back a folder

cd - : To return to the last directory you were in

- Files starting with a dot (.) are hidden files.

- To view hidden files: ls -a

- ls: The ls command can be used trickily in the following ways:

ls -lR : To view a long list of all the files (which includes directories) and their subdirectories recursively.

ls *.* : To view a list of all the files with extensions only.

- ls -ll: Gives a long list in the following format

drwxr-xr-x 2 root root 4096 2010-04-29 05:17 bin where

drwxr-xr-x : permission where d stands for directory, rwx stands for owner privilege, r-x stands for the group privilege and r-x stands for others permission respectively.

Here r stands for read, w for write and x for executable.

2=> link count

root=> owner

root=> group

4096=> directory size

2010-04-29=> date of last modification

05:17=> time of last modification

bin=> directory file(in blue)

The color code of the files is as follows:

Blue: Directory file

White: Normal file

Green: Executable file

Yellow: Device file

Magenta: Picture file

Cyan: link file

Red: Compressed file

File Symbol

-(Hyphen) : Normal file

d=directory

l=link file

b=Block device file

c=character device file

- Using the rm command: The rm command deletes a file (or, with the -rf options, a directory and everything in it) without any warning. A simple mistake like rm -rf / somedir instead of rm -rf /somedir can cause major chaos and delete the entire content of the / (root) directory. Hence it is always advisable to use the rm command with the -i option (which prompts before removal). Also, there is no undelete option in Linux.

- Copying hidden files: cp .* [destination] (copies hidden files only to a new destination)
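The rm -i advice above can be tried safely in a scratch directory. In this sketch the answers to the prompt are piped in so the example runs unattended; at a real terminal you would type y or n yourself. The directory and file names are made up for the example.

```shell
dir=$(mktemp -d)
touch "$dir/keep.txt" "$dir/drop.txt"

# rm -i asks before each removal and reads the answer from standard input.
echo n | rm -i "$dir/keep.txt"     # answer "n": the file is kept
echo y | rm -i "$dir/drop.txt"     # answer "y": the file is removed

ls "$dir"
```

Many people go one step further and define an alias so that rm always prompts, though that is a matter of taste.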

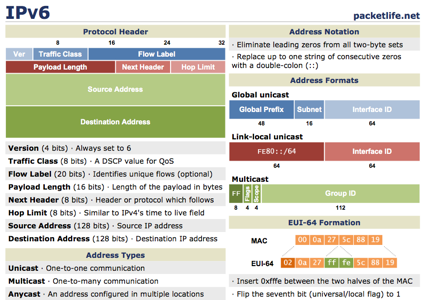

Networking Cheat Sheets

Some great resources available from Packet Life, including:

Protocols:

- BGP

- EIGRP

- First Hop Redundancy

- IEEE 802.11 WLAN

- IEEE 802.1X

- IPsec

- IPv4 Multicast

- IPv6

- IS-IS

- OSPF

- PPP

- RIP

- Spanning Tree

- a poster on IOS Interior Routing Protocols

Technologies:

Voice:

Miscellaneous:

Tuesday, December 14, 2010

Tom Friedman Discussing The World is Flat

The World is Flat 3.0 | MIT World:

Elaborating on his World is Flat thesis, Friedman describes how this new global order puts creative, entrepreneurial individuals in the driver’s seat, and poses distinct new challenges and opportunities.

The digital platform that connects Bangalore, Boston and Beijing enables users from any of these places to “plug, play, compete, connect and collaborate,” and is changing everything, says Friedman. He lists some basics to keep in mind: Whatever can be done, will be done, “and the only question left is will it be done by you or to you.”

Gingerbread and the Archos Tablet

Very true. I have some friends that have used it and don't really like it. iPad seems to be the winner on the market right now. I feel however, that Archos tablets will do really well once Android Gingerbread is released fully as it will have tablet support.

Great comment! It's true that the current version of Android is not optimized for larger displays, such as a tablet. Even Google is cautioning tablet manufacturers to wait until Gingerbread. The Archos tablet does look promising.

CNN’s iPad App in a Sentence

From Ben Brooks Review: CNN’s iPad App:

I am sad to say that the CNN app is among the worst news apps released for the iPad.

Chrome OS Predictions

Former Googler, FriendFeed founder and Facebook-er turned investor Paul Buchheit just tweeted this zinger:

Prediction: ChromeOS will be killed next year (or “merged” with Android)

The Anti-Course: Learn By Doing

The anti-course: An instructional job aid:

Here’s a short video that shows how we can break our addiction to the course and move training closer to the job.

Monday, December 13, 2010

Samsung Galaxy Tab Review

Fairly favorable review overall, but strange dichotomy: Pro - Flash as an option; Con - Flash as an option. They give it 3 and a half out of 5 stars, but based on the review I don't think they're recommending anyone buy it.

With a retail price of $599 off-contract, the Galaxy Tab is $30 less than the comparable 16GB iPad, but we're not sure it's automatically a bargain. We’re really disappointed with the web browsing, Flash and video chat performance of the Tab and we’re still a little perplexed with the form factor of the device, especially since you pay only slightly less for what amounts to much less screen area. To really steal market share from Apple, the Galaxy Tab would need to have a larger screen, more dedicated apps, and keep its dual-camera setup all while maintaining or dropping its price point.

- Posted using BlogPress from my iPad

World's Best Presentations - 2010

Making the Case for Virtual Classrooms

Another great video from CommonCraft!

The Outlook for IT Jobs

Why IT Jobs Are Never Coming Back:

The combination of more automation, increased offshoring, and better global IT infrastructure has taken its toll on the U.S. IT profession, resulting in a net loss of 1.5 million corporate IT jobs over the last decade, according to recent research from IT consultancy and benchmarking provider The Hackett Group.

The barely bright side for the American IT worker is that the total number of annual job losses will diminish slightly in the coming years, down from a high of 311,000 last year to around 115,000 a year through 2014, according to Hackett which based its research on internal IT benchmarking data and publicly available labor numbers. The really bad news? It's unlikely that IT will contribute to new job creation in the foreseeable future. "To succeed over the next five years, companies need to understand how to reposition existing talent; jettison or rationalize current jobs that have no place in the leveraged organization; and source, develop and retain still others to fill the need for new skills, both offshore and in retained onshore staff,"

Teaching Our Students Entrepreneurship

Young Entrepreneurs Create Their Own Jobs:

THE lesson may be that entrepreneurship can be a viable career path, not a renegade choice — especially since the promise of “Go to college, get good grades and then get a job,” isn’t working the way it once did. The new reality has forced a whole generation to redefine what a stable job is.

“I’ve seen all these people go to Wall Street, and those were supposed to be the good jobs. Now they are out of work,” says Windsor Hanger, 22, who turned down a marketing position at Bloomingdale’s to work on HerCampus.com, an online magazine. “It’s not a pure dichotomy anymore that entrepreneurship is risky and other jobs are safe, so why not do what I love?”

Mr. Gerber argues that the tools to become an entrepreneur are more accessible than they’ve ever been. Thanks to the Internet, there are fewer upfront costs. A business owner can build a Web site, host conference calls, create slide presentations online through a browser, and host live meetings and Web seminars — all on a shoestring.

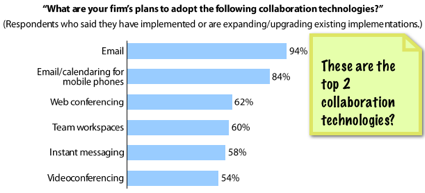

Chained to the Past: Collaboration and the Distributed Workforce

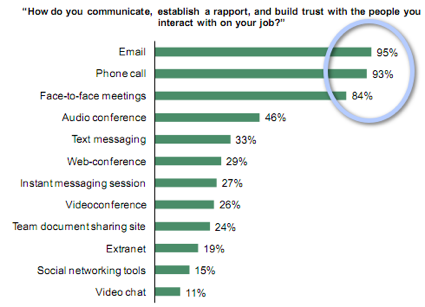

Pretty scary that in 2010 the top 2 "collaboration technologies" that businesses are looking to adopt are Email and Email/calendaring for mobile devices. The study was commissioned by Citrix Online, so I suppose they were hoping to see web and video conferencing at the top of the list. Alternatively, one could argue this demonstrates that there is room for growth.

Sadly, in spite of the emergence of social media, web conferencing, and robust collaboration tools, workers are still chained to 20th century standbys - Email, Phone, and Face-to-face. I think people use what they are comfortable with and what has worked in the past - most see abandoning these for "new" technologies as too risky.

Making Collaboration Work for the 21st Century's Distributed Workforce:

Last month we published an infographic on the international language of business based on a study that Citrix Online commissioned from Forrester Consulting. Today we're happy to launch the results of that study. The study yielded surprising findings related to generational and cultural working behaviors that impact how businesses communicate and collaborate in an increasingly dispersed workplace, and the implications for the future competitiveness of SMBs.

Key Findings

The study asked information workers of all ages in the United States, United Kingdom, France, Germany and Australia about their business communication habits.

Gen Y does not have the monopoly on technology use and social tools during the work day. Meanwhile, the older generation is getting with the program.

The younger you are, the less you value meetings - and pay attention.

Americans have more meetings - and pay more attention.

The in-person meeting is alive and well, but not necessarily effective.

In an era of multitasking, it's still considered rude in a meeting.

We still like to look each other in the eye.

- Why? To read body language, say 78%.

Usage among users of collaborative technologies is rising fast.

- 64% of those who use social networking tools in business use them more than last year. Video chat, team document-sharing sites and web conferencing also experienced significant increases in usage, with 56%, 55% and 52% respectively.

If you would like to download a copy of the report, you can find it posted in our Downloads section here.

Saturday, December 11, 2010

Final Nail in Microsoft Kin's Coffin

Kin Studio Closing On January 31st: Kin Will Really Die Now:

We thought it was weird when Microsoft killed Kin only to have Verizon oddly resurrect it. It doesn't matter anymore because on January 31, 2011, Microsoft's Kin Studio, the cloud that the Kin relies on to work, will close. Verizon will even give you a free 3G phone to replace your Kin.

Friday, December 10, 2010

FCC - 68 Percent of US Broadband Connections Aren't Really Broadband

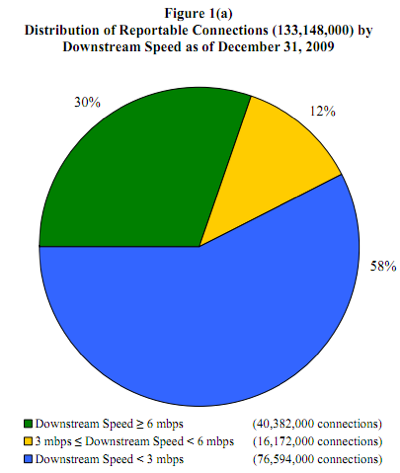

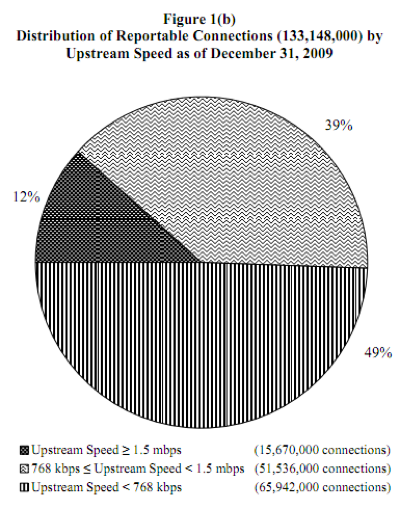

FCC report finds 68 percent of US broadband connections aren't really broadband:

As the FCC itself has made abundantly clear, the definition of "broadband" is an ever-changing one, and its latest report has now revealed just how hard it is for the US to keep up with those changes. According to the report, a full 68 percent of "broadband" connections in the US can't really be considered broadband, as they fall below the agency's most recent minimum requirement of 4 Mbps downstream and 1 Mbps upstream. Also notable, but somewhat buried in the report, are the FCC's findings on mobile broadband use. The agency found that mobile wireless service subscribers with mobile devices and "data plans for full internet access" grew a hefty 48% to 52 million in the second half of 2009, and that when you consider all connections over 200 kbps, mobile wireless is actually the leading technology at 39.4 percent, ahead of cable modems and ADSL at 32.4 and 23.3 percent, respectively. When it comes to connections over 3 Mbps, however, cable modems account for a huge 70 percent share.

Get the full report here.

The Death of Mass Transportation - Railways

Thomas Hawk Digital Connection » Blog Archive » Remember to Call Home

Thursday, December 09, 2010

Twitter In 5 Minutes A Day

How To Make Twitter Work For You In 5 Minutes A Day:

When I first started hearing about Twitter over a year ago I wasn’t sure what all the fuss was about. Now, a year later, I understand exactly what all the fuss is about!

Twitter is a genuinely awesome tool to help you grow your business and your personal network. By participating in Twitter for 5 minutes a day I have seen the following benefits within one year:

- I found an awesome business partner who is co-founder of my new start-up

- I raised awareness of my start-up Pluggio and made $12,000 revenue as a result

- I asked many questions about things I was struggling with and instantly got excellent answers

- I asked if anyone needed a contractor with my skill-set and instantly got a job

- I drove hundreds of users to the weekly tech podcast that I co-host (TechZing)

The Story of the Brooklyn Bridge

The Story of the Brooklyn Bridge: A Roebling Family Production:

The Brooklyn Bridge is one of the most famous landmarks in the Five Boroughs of New York City. For over a hundred years, it has been the main crossing-point of the East River for New Yorkers and Brooklynites, heading to each other’s part of town for work and play. Yet, in the scope of history, the Brooklyn bridge hasn’t been around that long at all. When its construction was finished in 1883, it was the biggest suspension-bridge in the United States, but the story behind its construction is one that is even more amazing than the structure that resulted from it. It took fourteen years, hundreds of men, cost one man his life, another man his mobility and thrust an unprepared housewife into the harrowing man’s world of engineering, construction and design, a world which she knew nothing about. This is the story of the Brooklyn Bridge.

...

The toll for crossing the bridge on the Opening Day was one penny. This was increased to three pennies for every day thereafter. 150,300 people walked across Brooklyn Bridge on its first day, and 1,800 vehicles drove across it! That’s a phenomenal amount, when you consider that the bridge was only opened to traffic at 5:00pm that afternoon!

The Lightfoil

Optical Wing Generates Lift from Light

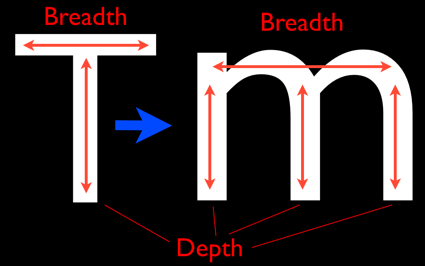

Physicists in the United States have demonstrated the optical analogue of an aerofoil--a "lightfoil" that generates lift when passing through laser light.

The demonstration, which comes more than a century after the development of the first airplanes, suggests that lightfoils could one day be used to maneuver objects in the vacuum of outer space using only the Sun's rays. "It's almost like the first stages of what the Wright brothers did," says lead author Grover Swartzlander, a physicist at the Rochester Institute of Technology, New York, whose study appeared December 5 in Nature Photonics. (Scientific American is part of Nature Publishing Group.)

The principle of a lightfoil is similar to that of an aerofoil: both require the pressure to be greater on one side than the other, which generates a force, or lift, in that direction. With an aerofoil, the pressure difference arises because air must pass faster over the longer, curved side to rejoin the air passing underneath.

With the lightfoil, the pressure comes from light rather than air. Such "radiation pressure" was theorized by physicists James Clerk Maxwell and Adolfo Bartoli in the late 19th century, and exists because photons impart momentum to an object when they reflect off or pass through it. It is the reason, for example, that comet tails always point away from the Sun--the Sun's rays push them that way.

Photo by rreis - http://flic.kr/p/4RWRo3

- Posted using BlogPress from my iPad

Wednesday, December 08, 2010

Learn Windows Phone 7 Programming in a Month

Great Windows Phone 7 programming resources from Jeff Blankenburg's 31 Days of Windows Phone 7:

In the month of October 2010, I published an article every day on Windows Phone 7 development. Here's the list of topics I covered, in order by the day they were released:

Day #1: Project Template

Day #2: Page Navigation

Day #3: The Back Button Paradigm

Day #4: Device Orientation

Day #5: System Theming

Day #6: Application Bar

Day #7: Launchers

Day #8: Choosers

Day #9: Debugger Tips

Day #10: Input Scope (on-screen Keyboard)

Day #11: Accelerometer

Day #12: Vibration Controller

Day #13: Location Services

Day #14: Tombstoning

Day #15: Isolated Storage

Day #16: Panorama Control

Day #17: Pivot Control

Day #18: WebBrowser Control

Day #19: Push Notification API

Day #20: Map Control

Day #21: Silverlight Toolkit

Day #22: Apps vs. Games

Day #23: Trial Versions of Your App

Day #24: Embedding Fonts

Day #25: Talking to Existing APIs (like Twitter)

Day #26: Sharing Your App With Other Developers

Day #27: Windows Phone Marketplace

Day #28: Advertising SDK

Day #29: Animations

Day #30: Gestures

Day #31: Charting

Photo by louisvolant - http://flic.kr/p/8W8Yyx

How We Consume 34 Gigabytes of Data - a Day

America Hungry, Need Data:

According to a new study by the University of California, San Diego, from 1980 through 2008 the total number of bytes bitten by Americans has upped by 6% per year and now stands at an incredibly huge sounding 3.6 zettabytes. (Or one billion trillion bytes, if that's easier to imagine.)

...

Here we break down what 3.6 zettabytes--or 34 gigabytes a day--means in terms of daily consumption.

[Data via Bits]

Infographic: Rob Vargas
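The two headline figures can be cross-checked with a little arithmetic. The sketch below assumes decimal units (1 zettabyte = 10^21 bytes, 1 gigabyte = 10^9 bytes) and a 365-day year. The implied number of consumers is derived here, not quoted from the study.

```python
# Cross-check of the quoted figures, assuming decimal units.
ZETTABYTE = 10**21
GIGABYTE = 10**9

total_per_year = 3.6 * ZETTABYTE      # quoted total for 2008
per_person_per_day = 34 * GIGABYTE    # quoted daily figure

# How many people consuming 34 GB every day add up to 3.6 ZB in a year?
implied_people = total_per_year / (per_person_per_day * 365)
print(f"implied consumers: {implied_people / 1e6:.0f} million")

# Compound growth of 6% per year from 1980 through 2008.
growth_factor = 1.06 ** (2008 - 1980)
print(f"growth since 1980: about {growth_factor:.1f}x")
```

The numbers hang together: 34 GB a day for roughly 290 million people comes to about 3.6 zettabytes a year.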

Are We Running Out of IPv4 Addresses?

Running out of IPv4 addresses:

This is a very interesting presentation given by John Curran, President and CEO of ARIN.

John goes into detail about the problems ARIN have faced trying to prepare ISPs for IPv6 and about backwards compatibility issues, and predicts that in a year's time ARIN will run out of IPv4 addresses.

In a year's time you should definitely turn your hand to IPv6 consulting.

The presentation can be found in PDF form here: http://www.defcon.org/images/defcon-18/dc-18-presentations/Curran/DEFCON-18-Curran-IPv6.pdf
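For a sense of scale, Python's standard `ipaddress` module can count the addresses in each space. This is only an illustration of why IPv4 is finite. It says nothing about how ARIN actually allocates blocks, and the `10.0.0.0/8` network is just a convenient example of a /8.

```python
import ipaddress

# The whole IPv4 and IPv6 address spaces expressed as single networks.
ipv4_space = ipaddress.ip_network("0.0.0.0/0")
ipv6_space = ipaddress.ip_network("::/0")

print(f"IPv4 addresses: {ipv4_space.num_addresses:,}")
print(f"IPv6 addresses: {ipv6_space.num_addresses:,}")

# A /8 block is 1/256th of the IPv4 space.
slash8 = ipaddress.ip_network("10.0.0.0/8")
blocks_of_slash8 = ipv4_space.num_addresses // slash8.num_addresses
print(f"addresses in one /8: {slash8.num_addresses:,}")
print(f"/8 blocks in all of IPv4: {blocks_of_slash8}")
```

With only 2^32 addresses to go around, and 2^128 in IPv6, it is easy to see why exhaustion was a question of when rather than if.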

Tuesday, December 07, 2010

Cell Phone Use Visualized

How the World Is Using Cellphones [INFOGRAPHIC]

The infographic illustrates, among other things, the number of cellphones per capita in various countries, the rate of cellphone adoption in the U.S. during the past decade and the acceptability of certain behaviors regarding cellphone use.

The Rise Of The Gentleman Hacker

Michael Arrington ran into

two entrepreneurs that have made a ton of money by selling their companies in the last couple of years. They’re both working on a slew of new projects, and the way they’re doing it is fascinating. I think it's more about just building stuff that interests them: if anything comes of it, great; if not, no biggie.

What does a person do after becoming fabulously wealthy?

...

But I’m hearing more and more about people who are simply setting up an office somewhere close to their multi-million dollar home in Silicon Valley or San Francisco, hiring a handful of hackers, and just building stuff to see what happens.

In many ways it’s analogous to the gentleman farmer – someone who farms, sorta, but doesn’t really worry about profit because they are independently wealthy.

Bring Back Unlimited Data

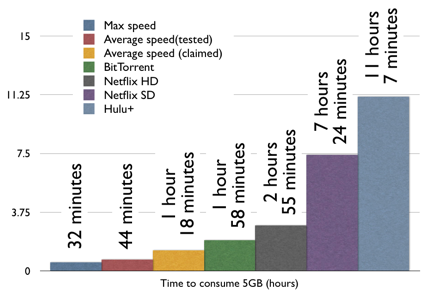

Using up your monthly 5 GB data allotment in less than a day - wow!

Verizon LTE Blows Through Monthly Data Cap in 32 Minutes:

Verizon's new 4G LTE network is so fast that you can use up your entire 5GB, $50 monthly allotment in 32 minutes.

I'm in the middle of testing Verizon's new LTE network, and the 2010-era speeds are soured by the 2005-era thinking on data plans. Verizon has priced LTE pretty much like 3G to encourage data sipping, not guzzling. As soon as you start using the latest high-bandwidth Internet services, your whole month's allotment can evaporate within a day.

My tests maxed out at an impressive 21Mbps. If you were downloading 5GB at that speed, it would only take you 32 minutes. Since the LTE network currently has almost nobody on it, I got average speeds around 15Mbps; Verizon estimates you'll be able to get around 8.5Mbps with a loaded network. But very few applications actually use those speeds consistently, so I also checked out some more common uses.

Downloading some files via BitTorrent, I registered 5.6Mbps, which could use up the cap in about two hours. Standard-definition Netflix video is kinder to your data cap; according to Netflix, they encode at 1500 kbps, so it'll take you 7.4 hours to burn through your monthly allotment. That's fewer than four movies.
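The article's figures are easy to reproduce. The sketch below assumes decimal units (5 GB = 5,000,000,000 bytes, 1 Mbps = 1,000,000 bits per second) and a constant transfer rate. `time_to_cap_minutes` is a name made up for this example.

```python
# How long does it take to use up a 5 GB cap at a steady rate?
CAP_BYTES = 5 * 10**9  # 5 GB, decimal units (assumption)


def time_to_cap_minutes(rate_mbps, cap_bytes=CAP_BYTES):
    """Minutes needed to transfer cap_bytes at rate_mbps megabits per second."""
    bits = cap_bytes * 8
    seconds = bits / (rate_mbps * 10**6)
    return seconds / 60


# Rates quoted in the article, in Mbps.
scenarios = [
    ("peak LTE", 21),
    ("average LTE", 15),
    ("loaded network estimate", 8.5),
    ("BitTorrent", 5.6),
    ("SD Netflix", 1.5),
]

for label, rate in scenarios:
    minutes = time_to_cap_minutes(rate)
    print(f"{label:24s} {rate:5.1f} Mbps -> {minutes:5.0f} min ({minutes / 60:.1f} h)")
```

The 32-minute, two-hour and 7.4-hour figures all fall out of the same division, so the real variable is how fast your applications actually pull data.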

Monday, December 06, 2010

More on the Kindle

The good:

I like it a lot. I was worried that I would not actually enjoy reading with it, or that it would be significantly inferior to real books. However, I do enjoy reading with it, and I think they have achieved their aim of making it so that it 'just disappears' like a good book does. It's a very small and light device, even with the leather cover I got for it (always best with a small child in the house), so it's in no way heavy or uncomfortable. It's relatively more expensive and fragile than a copy of The Economist or a paperback, so I don't sling it around quite so casually, and do try and remember to keep it out of reach of said small child, which makes it a little less worry-free than an actual book. On the other hand, it's much cheaper than an iPad or Samsung Galaxy or something like that, so it's not the end of the world if something happens to it, although it would be unfortunate to be without reading material for the time it took to replace it!

...

there are quite a few books available for it for *free* because they're old and out of copyright. Stuff you might get for 99 cents at a used book store as a paperback, but it's pretty cool to be able to just zip it right to the device, for nothing at all.

The bad:

The selection of material available for it is ok, but I'm just a bit disappointed - I thought offhand there were more books than there are. I regularly bump into things I might consider getting if they were available for it, but are not.

...

I find it somehow a bit monotonous to read everything in the same form factor and with the same fonts. It takes something away from the character of a book, especially a well-done hardback. Of course, what counts is the content, but the presentation counts for something too, I think.

...

it does have a browser, however, the screen refresh is so slow that I'd only really consider using it for reading web pages - wikipedia, say, rather than doing much with it.

Why the Kindle Is Losing Me

I really loved my Kindle when I first got it. I love writing books, and I’m for anything that helps people consume and purchase more of them - I don’t care if I make a fraction of the royalties off electronic sales.

I was especially struck by how much I wished I’d had a Kindle in college. As a literature major I read about five books a week, not to mention all the textbook reading for other courses. There were so many great touches in the UI that elevated the experience from just putting a book on a screen. There’s the Kindle store and its friction-free, one-click purchases from anywhere, say, a cafe the night before the exam when you still haven’t bought the book. There’s the freedom from lugging around a heavy backpack of books. And there are so many features that are designed specifically for collegiate reading like the ability to easily highlight, annotate, store those annotations in a specific file, and be able to easily search around within the book and find certain quotes or passages. I thought, this isn’t a beautiful piece of hardware, but it is clearly designed by someone who knows high-volume readers.

The "I heart Kindle" tone quickly deteriorates into a diatribe on the lack of page numbers and the resulting difficulty in creating footnotes for the book she is working on.

So how the hell is it possible that the Kindle doesn’t have a feature as obvious as page numbers? You know what happens when you don’t have page numbers? You can’t do a basic footnote for anything you’ve read. Yeah, that’s going to be a slight problem for the college market.

...

footnotes from a Kindle edition have been a nightmare. I have had to either use Google books to find page numbers or, worse, repurchase them in hard cover just to do footnotes.

...

Amazon needs to fix this now.

Great Resource for Network Mapping with Nmap

Nmap (“Network Mapper”) is a free and open source (license) utility for network exploration or security auditing. Many systems and network administrators also find it useful for tasks such as network inventory, managing service upgrade schedules, and monitoring host or service uptime.
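As a taste of what "monitoring host or service uptime" involves, the sketch below does a plain TCP connect check using only Python's standard library. To be clear, this is not Nmap and does not use Nmap's scanning techniques; the function name `is_port_open` and the port list are assumptions for illustration. Only probe hosts you are authorized to test.

```python
import socket


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection performs a full TCP handshake, then we close it.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and unreachable hosts.
        return False


if __name__ == "__main__":
    # Check a few common service ports on the local machine.
    for port in (22, 80, 443):
        state = "open" if is_port_open("127.0.0.1", port) else "closed or filtered"
        print(f"127.0.0.1:{port} is {state}")
```

A real tool like Nmap goes much further (host discovery, service and version detection, timing control), which is why it is worth learning rather than rolling your own.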

Apps and the Value of a Converged Device

23 devices my iPhone has replaced:

I started thinking about what a converged device the iPhone is and compiled this impressive list of devices I used to carry that are now replaced by my iPhone. This is an unprecedented level of convergence if you ask me. A quick informal tally shows that the iPhone is replacing $2,700 worth of equipment and several pounds of gear.

- Blackberry

- Phone

- iPod

- Nuvi GPS

- Sirius portable player

- RSA SecurID

- eBay/PayPal SecureID

- WiFi SIP phone

- PSP

- Nintendo DS

- Digital camera

- Flip Video Camera

- WiFi signal locator

- Amazon Kindle

- Police Scanner

- Radio

- Travel Alarm Clock

- Portable TV

- Portable Voice Recorder

- Calculator

- Compass

- White noise machine

- USB Key

Saturday, December 04, 2010

WiMAX Router with Integrated WiFi

Not sure where the nearest Clear store is, but this is nice!

Clear launches new at-home WiMAX router with integrated WiFi:

Hey, don't knock the naming engineers -- "Clear Modem with WiFi" just works. Indeed, that's the official title of Clear's new at-home WiMAX modem (the same one that flew through the FCC back in September), designed to bring the 4G superhighway into one's home for as little as $35 per month. According to the operator, it's an all-in-one solution that's "around the size of a book," offering 4G reception as well as an internal 802.11b/g/n router to distribute those waves across your home without the need for a separate WLAN router. It's available today from your local Clear store, with a $120 outright price or a $7 per month lease rate.

Friday, December 03, 2010

IT Salaries

The following list of IT salaries illustrates some overall trends. An IT project manager with more than 7 years of experience earns (on average) in the $100k range, while that same pro with just 2-4 years under their belt pulls in about $70k. An IT staffer who works for a big outfit makes more than his small business counterpart (for instance, an Enterprise Infrastructure Manager makes $108k). IT security professionals are perennially in demand, so the $102k annual salary for IS Security Managers is no surprise. And thought leaders, the people who actually envision IT systems, do quite well, hence the $108k for Systems Architects.

As for developers, their salary levels range wildly, from a newbie client server programmer, $54k, to an advanced Operating Systems Programmer, $105k. (Naturally location makes a big difference as well, with developers in big cities making far more).

Acer's Tablet

Acer's Tablet Play: 10 Reasons It's a Threat to Apple, Samsung:

1. Its computing business is growing

2. It has both screen sizes covered

3. Windows and Android is a good combination

4. Acer gets design

5. It's waiting

6. Its tablet capitalizes on gaming

7. It has the enterprise possibility

8. The connectivity is there

9. The 10.1-inch model has entertainment covered

10. Its history says so

Advice for New Faculty

- Find places to work: home, the library, the coffee shop, whatever.

- Say “no” to things.

- Don’t be afraid to do a bad job on things. [you need to read his explanation]

- Find ways to keep teaching fun.

- Stay in shape.

- Keep doing technical projects.

- Stop caring about individual papers and proposals.

- Stop caring too much about tenure and just get work done.

Thursday, December 02, 2010

How to Destroy Your Corporate Brand

How a capacitor popped Dell's reputation:

From 2003 to 2005 Dell shipped 11.8 million PCs with a known defect but chose not to fully disclose the situation to all of its customers. The inside details of its management decisions, unsealed after settlement of a lawsuit, and the consequences of its customer service blunders, appeared side-by-side in two New York Times stories recently.

Dell finally settled the lawsuit in September and its enterprise business has recovered. But the consumer side is struggling, in part because of what one analyst called "lingering" negative perceptions of its customer service. It seems Dell mishandled the situation at virtually every opportunity.

- misread the severity of the problem,

- slow to act,

- focused on minimizing financial damage, rather than brand damage,

- treated large customers better than average consumers.

At first Dell underestimated the scope of the problem, which it tracked back to inferior capacitors that could lead to a catastrophic failure of PC motherboards. Dell grossly underestimated failure rates. Early on it expected rates as high as 12%, which is bad enough. But even as it revised estimated failure rates drastically upward, Dell continued to ship PCs with the defective capacitors as it struggled to identify the components and remove them from its supply chain.

Dell kept production moving and revenues coming in in the short term, but it also dramatically increased its liability and damaged the company's reputation for quality.

Failure to be proactive

Rather than be proactive, Dell's strategy was to "fix on fail." Even as some frustrated customers reported failure after failure and rumors began to circulate, for the vast majority of customers Dell did not choose to proactively replace or repair machines known to have the defective component.

As the scope of the crisis became clear, with at least one volume customer reporting failure rates exceeding 20 percent, and with its own estimates projecting potential failure rates of 45 to 97 percent, management made a series of decisions that appear to have been carefully calibrated to minimize Dell's financial exposure and avoid a full recall.

The PC maker began proactively replacing PCs with the defect, but only for its large customers that made volume purchases, and then only after the customer experienced a failure rate exceeding 5%. It also triaged customers into three categories: Those who might move their accounts over the issue, those who might cut back on purchases over the problems - and everyone else.

Solar Energy in the US

From what_if_solar_was_subsidized_like_fossil_fuels

Predictions for RIM/Blackberry

The Death of RIM

If one-function devices could continue to triumph, the iPod would not be supplanted by the iPhone.

A friend just asked me if I was on BBM. That’s BlackBerry Messenger for the uninitiated. And many may never be initiated, because BlackBerry is so 2001. Or 5. Or maybe even 6 or 7, but certainly not 11.

BlackBerries do one thing incredibly well, process e-mail.

And that’s it.

Wednesday, December 01, 2010

Is This the Beginning of the End of Net Neutrality?

OpenDNS was founded in 2006 and quickly made a lot of fans around here due to their fast, reliable DNS servers and DNS services. It has been a profitable business; in 2008 it was estimated that OpenDNS generates a whopping $20,000 per day off of their DNS redirection relationship with Yahoo. Every DNS outage over the last four years effectively acted as an advertisement for OpenDNS, and the company has grown substantially -- now serving roughly 20 million users.

As ISPs slowly discovered that OpenDNS was eroding a possible revenue stream (redirection portal ads), many ISPs rushed to offer DNS redirection systems of their own -- with various degrees of success.

...

Some ISPs may now begin taking a harder stance, if this Washington Post interview with OpenDNS founder David Ulevitch is any indication. According to Ulevitch, OpenDNS is being blocked by Verizon Wireless already, and Ulevitch is concerned that a growing number of landline broadband ISPs are going to begin doing this as well to protect their coffers:

They do not want to see DNS traffic, queries and data streams leaving them. In the way we have monetized domain name services, they want to do what we do. But they aren't an opt-in service like we are, and they can also block us, which is what we are concerned about. And they try to block us in crafty ways. There was a report of an ISP working group whose members said that for "security" reasons, they should block alternative DNS services.

This raises an interesting neutrality situation we'll need to keep an eye on, given that ISPs who block OpenDNS will certainly try to insist it's about security, not money (and will certainly have cherry picked data pie charts to "prove" it). Granted, OpenDNS could just do what companies like Skype and Google have been doing recently -- and throw their neutrality principles out the window in exchange for large bags of money and exclusive service partnerships.

Innovative Use of the iPad

iPads Help FareShare Rescue Good Food from Landfill

"Approximately 28,000 supermarkets worth of food goes to landfill every year in Victoria," suggests FareShare CEO Marcus Godinho. FareShare is a food rescue service in Melbourne, Australia, who were joint winners of the 2010 Premier's Sustainability Awards. So far this year they've saved 468 tonnes of food that would've otherwise been sent to landfill. Then 3,000 volunteers have cooked 1,114,461 meals for the needy, and in doing so FareShare has supported 130 charities.

As those impressive figures suggest, this is no ordinary do-gooder group, but a well-oiled, switched-on, dynamic organisation. Their recent adoption of Apple iPads to streamline operations further distances them from the quaint, fuddy-duddy soup kitchens of old.

Harnessing Technology

Using an iPad application developed by staff and volunteers, IT Wire reports that FareShare is now able to alert the kitchen's chefs of what food ingredients have been collected in real time, increasing their capacity to prepare free nutritious meals for charities. IT Wire notes the app also reduces the charity's paper trail, improves monitoring and reporting, and increases efficiency.

Tuesday, November 30, 2010

Safety of Backscatter Body Scanners?

Molecular biologist Jason Bell:

According to the TSA safety documents, AIT uses a 50 keV source that emits a broad spectrum (see adjacent graph from here). Essentially, this means that the X-ray source used in the Rapiscan system is the same as those used for mammograms and some dental X-rays, and uses BOTH ‘soft’ and ‘hard’ X-rays. It's very disturbing that the TSA has been misleading on this point. Here is the real catch: the softer the X-ray, the more it's absorbed by the body, and the higher the biologically relevant dose! This means that this radiation is potentially worse than a higher energy medical chest X-ray.

(Via Ben Brooks.)

Online Journalism Described

Via vanderwal's brain bits

ilovecharts:

via Kurt White

(via stoweboyd)

Biotech Hard Drives?

Bioencryption can store almost a million gigabytes of data inside bacteria

A new method of data storage that converts information into DNA sequences allows you to store the contents of an entire computer hard-drive on a gram's worth of E. coli bacteria...and perhaps considerably more than that.

What's the potential?

The possibilities of this biotechnology are truly amazing. A single gram of E. coli cells could hold up to 900,000 gigabytes (or 900 terabytes) of data, meaning these bacteria have almost 500 times the storage capacity of a top of the line commercial hard drive.

- Posted using BlogPress from my iPhone

Monday, November 29, 2010

What to Do About Struggling Computer Science Programs

Eliminate the Computer Science major: I believe we should do away with Computer Science as a field of undergraduate study

until you read the rest of the thought - at least, the way it's implemented right now.

Morris considers other majors (Geology, Biology, Art) and the subsequent careers (geologist, biologist, artist) and wonders why there's a disconnect between the Computer Science major and a programming career.

Morris sees computer science as theoretical and involving

complex math and theory that is useful and interesting and takes a sharp mind to fully comprehend;

Unfortunately,

most undergrads don't care and increasingly are not being exposed to it. Instead, popular languages are being taught in undergraduate courses, with the intention of preparing students for future work (see Joel Spolsky's The Perils of JavaSchools for another take on this phenomenon.)

Morris doesn't think students in these programs are learning programming; instead,

Students with a passion for programming study it in their free time, work on their own projects, and do most of their learning outside of the classroom. Fresh graduates with a BS in Computer Science and no real experience programming just don't have the skills they need to do anything but grunt work.

Morris assesses the state of computer science programs and proposes his own remedy:

Solutions thus far have been to dumb down the CS degree by using higher level ("easier") programming languages and teaching less theory and advanced math. I think that aiming to dumb down the curriculum in order to prepare students for a corporate programming career is killing off the pool of intelligent academic computer scientists. The solution? Accept that there's a difference and offer two distinct majors: Computer Science and Programming [He later amends the post to note that Software Engineering is a better term than Programming].

According to Morris, "programming students would learn the basics they need to succeed in the corporate world," "while smart CS students wouldn't be hampered by the dumbing down of their programs of study." There would be negative consequences as well, including "a huge shift of students from CS to Programming," smaller Computer Science departments, and potentially the closing of "some CS departments."

Innovation, Monopolies and Fear of Disruptive Technologies

How Ma Bell Shelved the Future for 60 Years:

What would the world be like if fiber optic and mobile phones had been available in the 1930s? Would the decade be known as the start of the Information Revolution rather than the Great Depression?

The Great Bell Labs

In early 1934, Clarence Hickman, a Bell Labs engineer, had a secret machine, about six feet tall, standing in his office. It was a device without equal in the world, decades ahead of its time. If you called and there was no answer on the phone line to which Hickman's invention was connected, the machine would beep and a recording device would come on allowing the caller to leave a message.

The genius at the heart of Hickman's secret proto–answering machine was not so much the concept (perceptive of social change as that was) but rather the technical principle that made it work and that would, eventually, transform the world: magnetic recording tape. So what happened to magnetic recording tape in the 1930s? Why didn't we get magnetic storage in the 1930s?

What's interesting is that Hickman's invention in the 1930s would not be "discovered" until the 1990s. For soon after Hickman had demonstrated his invention, AT&T ordered the Labs to cease all research into magnetic storage, and Hickman's research was suppressed and concealed for more than sixty years, coming to light only when the historian Mark Clark came across Hickman's laboratory notebook in the Bell archives.

"The impressive technical successes of Bell Labs' scientists and engineers," writes Clark, "were hidden by the upper management of both Bell Labs and AT&T." AT&T "refused to develop magnetic recording for consumer use and actively discouraged its development and use by others." One would ask WHY? The answer is not that surprising.

In the language of innovation theory, the output of the Bell Labs was practically restricted to sustaining inventions; disruptive technologies, those that might even cast a shadow of uncertainty over the business model, were simply out of the question. It's interesting to wonder whether Google, Apple, Facebook, and Microsoft have all either fallen or will fall into this same trap.

The recording machine is only one example of a technology that AT&T, out of such fears, would for years suppress or fail to market: fiber optics, mobile telephones, digital subscriber lines (DSL), facsimile machines, speakerphones - the list goes on and on. These technologies, ranging from novel to revolutionary, were simply too daring for Bell's comfort. Without a reliable sense of how they might affect the Bell system, AT&T and its heirs would deploy each with painfully slow caution, if at all.

Photo by jrmyst - http://flic.kr/p/gc6UF